Extracts from the Department of Basic Education, Report of Annual National Assessments 2011, June 28 2011

Executive Summary

2011 is likely to be considered a watershed year in the struggle to ensure that the poor in South Africa enjoy quality basic education. This year has seen the implementation of two significant national interventions: the introduction of standardised national workbooks for Grades 1 to 6 aimed at improving classroom practices and the first fully-fledged application of the Annual National Assessments (ANA) programme with a focus on learning in Grades 1 to 6. This report deals with the second of these two interventions.

Specifically, the report describes the successes and challenges experienced in the implementation of the 2011 wave of ANA and provides key findings emerging from the data collected. Whilst this report provides important information that must inform the focus of current actions by the education departments, school principals, teachers, parents and other stakeholders, this report constitutes only a part of the overall 2011 ANA process, which includes additional reports and activities emerging from the data that have been collected and the experiences gained.

The 2011 wave of ANA is the outcome of many years of capacity building and learning in the area of standardised assessments. ANA is far more ambitious and is designed to have a much greater impact than its predecessor, the Systemic Evaluation programme, run in 2001, 2004 and 2007 and involving in each run just one grade and a sample of between 35 000 and 55 000 learners.

In contrast, ANA 2011 involved the testing of all learners in public schools in Grades 2 to 7 during February 2011, the focus being on the levels of learner performance in the previous year, in other words in Grades 1 to 6. This means that almost six million learners were tested.

In 2008 and 2009 trial runs of ANA were conducted, largely with a focus on exposing teachers to better assessment practices. However, 2011 is the first year in which ANA produced sufficiently standardised data in order to allow for the analysis provided in this report and the generation of tools that will enable provinces and districts to target the right support to schools at the Foundation and Intermediate Phases (Grades 1 to 6) in a more effective manner.

ANA 2011 moreover draws from experiences in a number of international assessment programmes in which South Africa has actively participated during the last decade. These include the regional Southern and East Africa Consortium for Monitoring Education Quality (SACMEQ) programme and the global Progress in International Reading Literacy Study (PIRLS) and Trends in International Mathematics and Science Studies (TIMSS) programmes.

ANA 2011 involved both ‘universal ANA’ and ‘verification ANA’. In universal ANA all learners in Grades 2 to 7 were tested in both languages and mathematics. Verification ANA involved applying more rigorous procedures to a sample of around 1 800 schools offering Grades 3 or 6 in order to verify results emerging from universal ANA. Specifically, in verification ANA external controls in the test administration process were more rigorous and test scripts from each school were re-marked after the initial marking by teachers.

The purpose of ANA is to make a decisive contribution towards better learning in schools. Under-performance in schools, especially schools serving the poorest communities, is a widely acknowledged problem. Clearly, ANA cannot bring about improvements on its own and should be seen as part of the wider range of interventions undertaken by Government to promote quality schooling. Part of the purpose of ANA is to provide the necessary information to planners, from the Minister all the way to teachers who need to plan their work in the classroom. At the national level, ANA is a vital instrument intended to measure progress towards the targets set by President Zuma in his 2009 State of the Nation address. These targets state that by 2014, 60% of learners in Grades 3, 6 and 9 should perform at an acceptable level in languages and mathematics. However, the information obtained from ANA is needed for many other purposes at the national level. For instance, it is needed to diagnose in which specific areas teachers need most support and how the learning materials used by learners need to be improved.

International and local experiences point to the importance of ensuring that programmes such as ANA are viewed not only as measurement activities, but also as programmes that encourage action that will lead to better practices. Action Plan to 2014: Towards the realisation of Schooling 2025, released by the Minister of Basic Education in 2010, highlights four such areas of action:

ANA should encourage teachers to assess learners using appropriate standards and methods. This has been a focus of ANA since the trial runs of 2008 and 2009. Evidence indicates that ANA has indeed brought about better assessment practices in the classroom, partly by encouraging district offices and provincial departments to review their own initiatives aimed at supporting teachers in this area.

ANA should encourage better targeting of support to schools. District offices, which have been integrally involved in the 2008 and 2009 trial runs, have used ANA results to produce a better picture of what support to provide to which schools. The 2011 ANA data allow the Department of Basic Education (DBE) to assist districts and provinces in a more direct way in this area. Specifically, the 2011 data will be used to produce standard reports for districts that will encourage a more effective approach to the targeting of schools for support purposes.

ANA should encourage the celebration of success in schools. By providing schools with a clearer picture of how well they perform in comparison to schools facing similar socio-economic challenges, schools that perform well will know when this is the case and schools which do not will have a clearer idea of what is possible and who they could learn from. Government does not support the use of ANA for the purposes of ‘naming and shaming’ those who do not perform well. At the same time, good performance should be recognised and lauded.

ANA should encourage greater parent involvement in improving the learning process. During 2011 some schools have used ANA as an opportunity to get parents more involved in academic improvement. Specifically, ANA can provide parents on the School Governing Body, as well as parents in general, with a better picture of the grades and subjects where special attention is needed. This can assist both efforts in the school and efforts in the home aimed at ensuring that learning occurs as it should.

The data analysis provided in this report is based on the data collected from the approximately 1 800 schools forming part of verification ANA. Key findings discussed in this report include the following:

Above all, the quality of basic education is still well below what it should be. The percentage of learners reaching at least a ‘partially achieved’ level of performance varies from 30% to 47%, depending on the grade and subject considered. The percentage of learners reaching the ‘achieved’ level of performance varies from 12% to 31%. Even the best provincial figure in this regard, 46% for Grade 3 literacy in Western Cape, is well below what can be considered acceptable. These figures reflect the magnitude of the challenge still facing the sector. However, they also reflect the high standards that we have set for ourselves. The figures are not very different from those of other countries with similar assessment systems and similar aspirations.

Contrary to the expectations of many, the standards that teachers set when they mark are on average the correct ones, meaning that provincial and national results based on marks given by teachers do not differ greatly from results based on external marking. However, there is a significant minority of teachers who give marks that are either too high or too low. This emphasises the need to strengthen support to teachers in the use of appropriate standards in assessing learners.

The analysis confirms that the greatest need for support lies in quintiles 1 and 2, quintiles which cover largely rural and the poorest communities. On the positive side, in all provinces there are schools within these quintiles which can be considered to be showing promise and which can provide guidance both to other schools and to district officials in understanding what practices contribute to better teaching and learning.

The results provided in this report do not point towards the presence of the critical upward turn needed to attain the 60% targets referred to above. There are however some indications that efforts that have gone into supporting schools are succeeding. For instance, recent provincial efforts in Eastern Cape and KwaZulu-Natal to strengthen teacher capacity to assess learners are likely to explain some of the apparent improvements in these provinces. However, as explained in the report, it would be dangerous to read too much into the differences between the 2011 ANA results and results from earlier assessments, or even the differences between provinces in 2011 ANA. Importantly, this report focuses on aggregate trends only and does not include an analysis of trends at the level of individual test items, or test questions. The DBE is currently conducting this latter analysis and the results from this will provide an indication of whether upward or downward trends over time can be observed with respect to specific skills, such as multiplication or fractions in the case of mathematics.

Though the challenges for the schooling system remain great, the 2011 wave of ANA provides a basis for optimism. Both the process of 2011 ANA and the information obtained from this process represent a basis for improvement that did not exist previously. Schools and the education departments have gained important experiences in better assessment and, through this, a better focus on what must improve. The unprecedented step of providing all Grades 1 to 6 learners with national workbooks in 2011 has, according to preliminary reports, shifted classroom practices in the right direction. The 2012 wave of ANA, to be conducted early in the 2012 school year, will serve as a critical instrument with which to monitor the degree to which national workbooks and other interventions, such as the streamlining of the national curriculum, have had an impact on learning. The 2011 wave of ANA has provided a wealth of experience in how to conduct a programme of this nature in a way that contributes to quality education. Lessons learnt in 2011, which are discussed in this report, will inform the implementation of the 2012 wave of ANA.

***

Provincial ANA averages in a historical context

If one examines how well provinces performed relative to each other in 2011 ANA and compares this to the provincial averages derived from earlier assessments in the 2004 to 2007 period, there is considerable consistency, in particular as far as the relative positions of Free State, Gauteng, Northern Cape and Western Cape are concerned. Eastern Cape's Grade 3 performance according to ANA 2011 is considerably higher than one would expect, given this province's performance in earlier assessments. To some extent, the same can be said of KwaZulu-Natal. The results suggest that whilst ANA provides relatively accurate provincial pictures of learner performance with respect to at least half of the tests, there is room for more standardisation in the way ANA is implemented.

***

The percentage of learners performing at specific levels

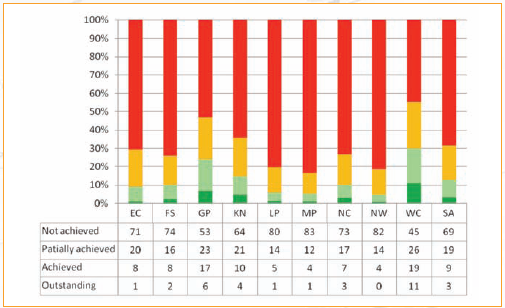

In all four of the tests examined in this report, fewer than half of all learners in the country perform at a level that indicates that they have at least partially achieved the competencies specified in the curriculum. In Grade 6, the results indicate that only around 30% of learners fall into this category. At the top end, too few learners are able to achieve outstanding results. For instance, only 3% of learners in Grade 6 mathematics can be considered outstanding.

The ANA tests and marking memoranda are designed to produce the following correspondence between the percentage mark and descriptions of achievement.

Table 9: Levels of achievement

| Level | Description | Percentage mark |
|---|---|---|
| Level 1 | Not achieved | Less than 35% |
| Level 2 | Partially achieved | At least 35% but less than 50% |
| Level 3 | Achieved | At least 50% but less than 70% |
| Level 4 | Outstanding | At least 70% |
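The correspondence in Table 9 can be written as a short lookup. The sketch below is illustrative only: the function name `achievement_level` is an assumption, not something taken from the report, and the 0 to 100 range check is an added safeguard.

```python
def achievement_level(percentage_mark):
    """Return (level, description) for a percentage mark, following Table 9.

    Hypothetical helper for illustration; the thresholds are those in Table 9.
    """
    if not 0 <= percentage_mark <= 100:
        raise ValueError("percentage mark must be between 0 and 100")
    if percentage_mark < 35:
        return 1, "Not achieved"
    if percentage_mark < 50:
        return 2, "Partially achieved"
    if percentage_mark < 70:
        return 3, "Achieved"
    return 4, "Outstanding"


# A mark of exactly 35% already counts as "Partially achieved".
print(achievement_level(35))
```

Each lower bound is inclusive, so a learner scoring exactly 35%, 50% or 70% falls into the higher of the two adjacent levels.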

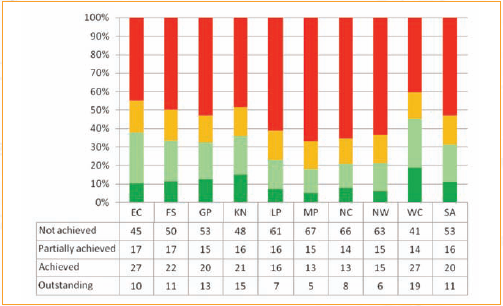

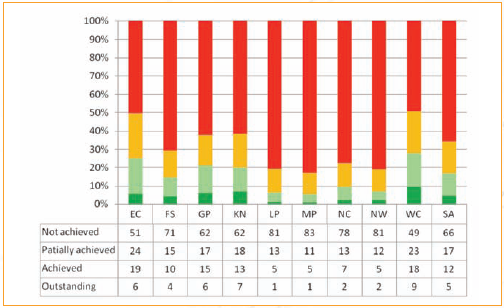

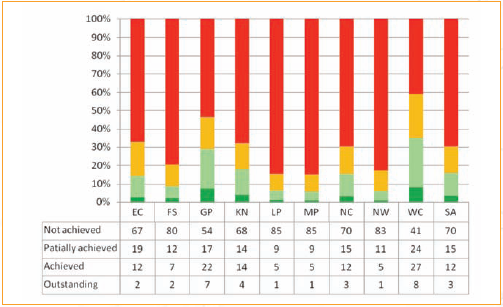

The next four graphs illustrate the distribution of learners across the four achievement levels, with breakdowns by province. Given the fact that the data are from a sample, it is important to keep in mind the confidence intervals of the statistics. At the national level, each statistic, for instance the estimate that 11% of learners achieved an outstanding level of performance in Grade 3 literacy, is subject to a confidence interval of around 5 percentage points.

In other words, we can be 95% certain that the true national statistic for outstanding performance for Grade 3 literacy lies between about 8.5% and 13.5%. The confidence intervals for the provincial statistics are around twice as large, in other words around 10 percentage points. A critical statistic is the percentage of learners achieving at levels 2, 3 or 4, meaning that they have achieved at least a reasonable part of the knowledge and skills they should have achieved by their grade.
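The arithmetic behind the quoted range can be sketched as follows. The sketch assumes that the interval is symmetric around the estimate and that "around 5 percentage points" refers to the full width of the interval, which is consistent with the 8.5% to 13.5% range given in the text. The function name `interval_bounds` is hypothetical.

```python
def interval_bounds(estimate, width):
    """Return (lower, upper) for an interval of the given full width.

    Assumes the interval is centred on the estimate; both arguments
    are in percentage points.
    """
    half_width = width / 2
    return estimate - half_width, estimate + half_width


# National estimate for outstanding performance in Grade 3 literacy:
# 11% with an interval about 5 percentage points wide.
national = interval_bounds(11.0, 5.0)
print(national)  # (8.5, 13.5)
```

For a provincial statistic the same call would use a width of around 10 percentage points, giving a range of roughly 5 points either side of the estimate.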

In Grade 3 literacy, this statistic was 47% at the national level. This is similar to the corresponding statistic found in the 2007 Grade 3 Systemic Evaluation, which stood at 48%. However, as has been emphasised above, comparisons between ANA 2011 statistics and previous assessment results need to be undertaken with much care, for a variety of reasons. The key overall finding is that in 2011, learner performance continued to be well below what it should be, especially for the children of the poorest and most disadvantaged South Africans.

Figure 11: Performance in Grade 3 literacy by level

In Grade 3 numeracy, 34% of learners attained a level of performance that represented at least partial achievement. The corresponding statistic from the 2007 Systemic Evaluation was 43%. The large discrepancy between the two figures highlights the need for a more in-depth item-level analysis to examine differences in performance with respect to similarly difficult questions, and the degree to which the overall test in ANA 2011 was overly difficult, or the 2007 Systemic Evaluation test was too easy.

Figure 12: Performance in Grade 3 numeracy by level

The Grade 6 languages results point to 30% of learners nationally reaching at least the partially achieved level. This compares to 37% in the 2004 Grade 6 Systemic Evaluation.

Figure 13: Performance in Grade 6 languages by level

In Grade 6 mathematics, 31% of learners reached at least a partially achieved level of performance in ANA 2011. In the 2004 Systemic Evaluation, the corresponding statistic was 19%. Given the data limitations, it is not possible to conclude that this represents an unequivocal improvement in performance.

Figure 14: Performance in Grade 6 mathematics by level
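The four comparisons with the Systemic Evaluation quoted above can be collected in one place. The sketch below only restates the percentages given in the text and subtracts them; the variable and function names are assumptions made for illustration. As the report stresses, the differences should be read with great care and do not on their own show a trend.

```python
# (test, ANA 2011 %, Systemic Evaluation year, Systemic Evaluation %)
# Percentages are the shares of learners reaching at least the
# "partially achieved" level, as quoted in the text.
comparisons = [
    ("Grade 3 literacy", 47, 2007, 48),
    ("Grade 3 numeracy", 34, 2007, 43),
    ("Grade 6 languages", 30, 2004, 37),
    ("Grade 6 mathematics", 31, 2004, 19),
]


def point_differences(rows):
    """Return ANA 2011 minus the earlier figure, in percentage points."""
    return {test: ana - earlier for test, ana, _year, earlier in rows}


differences = point_differences(comparisons)
for test, diff in differences.items():
    print(f"{test}: {diff:+d} percentage points")
```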

Preliminary results from universal ANA also point to the persistence of major challenges in improving learner performance. For example, the percentage of learners reaching at least the partially achieved level in Grade 6 mathematics, which stood at 26% and 27% in Free State and Northern Cape according to verification ANA, stood at just 28% and 29% according to universal ANA.

Source: Department of Basic Education, June 28 2011.
